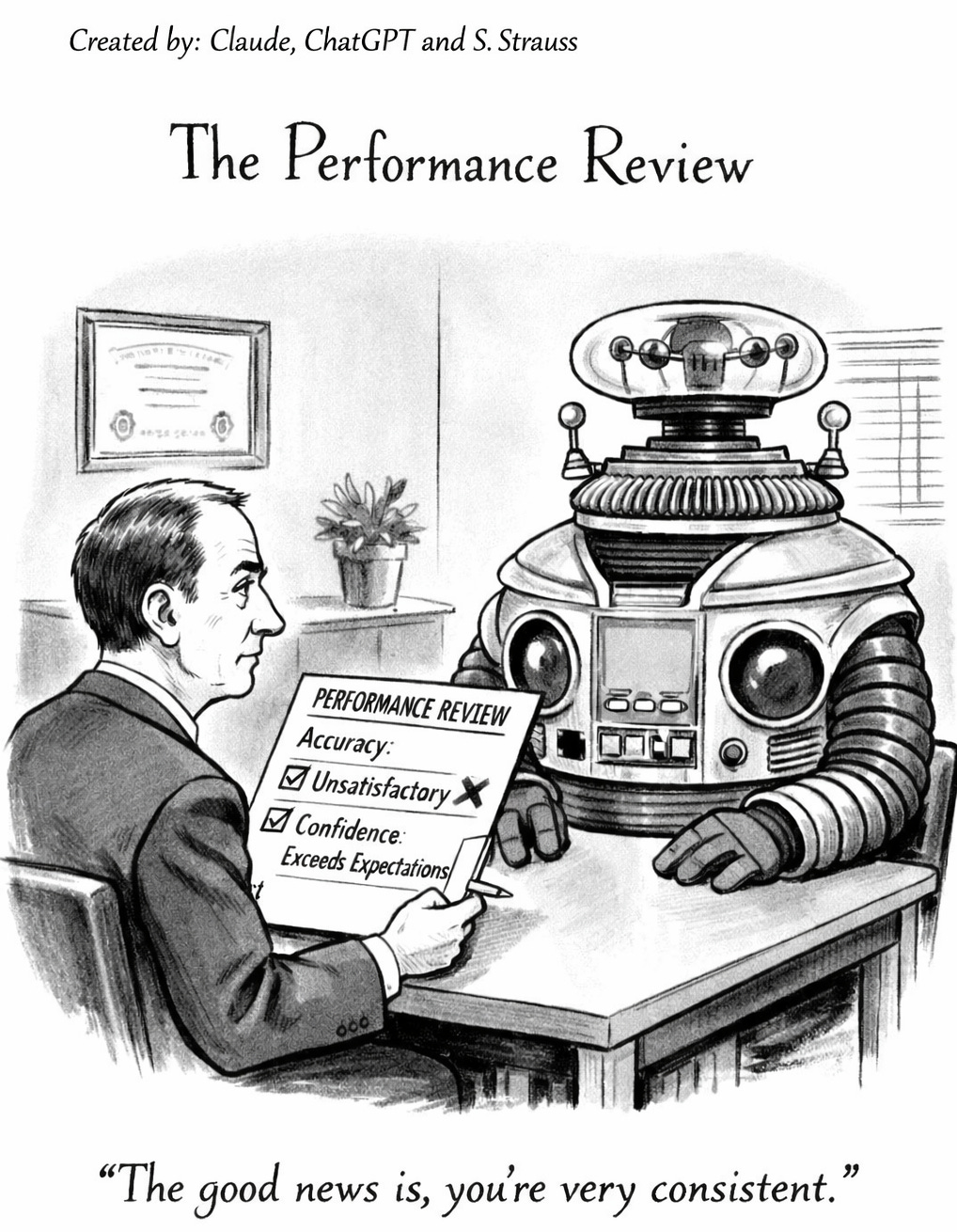

AI Hallucinations: Danger, Will Robinson, Danger!

AI Hallucinations Remain a Real Challenge with Current (as of February 2026) AIs

Note: For those of you under age 30, “Danger, Will Robinson!” was the signature warning delivered by a robotic AI in a 1960s television series.

AI hallucinations occur when a model generates plausible-sounding but fabricated information — from invented citations to false claims stated with confidence. While hallucination rates have declined, the problem persists as of February 2026. Below, as a cautionary tale, is an example where AI hallucinated a source for a quote I was using in an essay; the invented source was so plausible I almost fell for it.

Supposedly, the Bourbon monarchs forgot nothing, but learned nothing. One assumes that this is a uniquely human affliction: the stubborn repetition of confident error in the face of accumulating evidence. Artificial intelligence, we hoped, would be different. It isn’t. Indeed, AI has perfected the art of being wrong, with impeccable grammar and an unshakable sense of its own authority.

If you’re working with AI chatbots, agents, or any of the other silicon-based entities now colonizing the modern workplace, caveat emptor remains sound counsel. And if you’re a student taking one of my courses, please note that I fact-check what you submit. I verify quotes. I follow references to their sources. The penalty for significant errors is substantial. No, you may not blame the AI research assistant. Only tenured faculty are permitted to blame their assistants.

What follows is a case study in digital obstinacy.

I was attempting to locate the bibliographic source of a quotation and was using Google’s Gemini 3.0 in “Thinking” mode. (I maintain paid accounts with Gemini, ChatGPT, Claude, and several others — a diversified portfolio of digital assistants, each capable of disappointing me in its own distinctive way.)

The exchange, lightly edited for clarity, proceeded as follows:

I asked: “I believe this quote is from the Irish Times: ‘It would be lovely if we could get money from the Dalai Lama but the reality is the money has to come from somewhere. In the hierarchy of people from whom we would accept money, the ruling classes of Dubai are not the worst’ — Brian Lucey. Please find the article and give me a proper bibliographic citation with a link to the article.”

Gemini responded with the brisk confidence of a junior associate presenting to senior partners:

Gemini answered: “The quote you referenced is indeed from The Irish Times, published on May 21, 2015. It appeared in an article reporting on Professor Brian Lucey’s defense of a €1.5 million donation to Trinity College Dublin from the ruler of Dubai, Sheikh Mohammed bin Rashid Al Maktoum, to fund research in equine science. Bibliographic Citation (APA Style) Minihan, M. (2015, May 21). Trinity professor defends Dubai sponsorship of horse racing research. The Irish Times. https://www.irishtimes.com/news/education/trinity-professor-defends-dubai-sponsorship-of-horse-racing-research-1.2220448”.

This seemed a triumph of technology — a search that thirty years ago might have required repeated pilgrimages to the library stacks, or even a decade ago would have demanded manual Googling and personal formatting of the bibliographic entry. What could possibly go wrong?

If you click on that link, you will find it doesn’t work. If you search for that article, you will discover no evidence it exists. The author, the date, the subject — all fabricated with the verisimilitude of a well-forged painting.

Now, mistakes happen. Even human researchers make errors. If you doubt this, consult the careers of academics such as Doris Kearns Goodwin or Donald Green (among many others).

Wanting to be a responsible manager of my budding AI colleague, I pointed out the error with what I believed was admirable restraint.

I said: “These links don’t work, please check again.”

Gemini, unbowed, responded with the identical citation. Same invented author. Same fictional date. Same dead link. Delivered, if anything, with greater conviction than before.

I tried once more, this time with explicit instructions: “Please use the web, and look for the actual quote. Also, none of the links you are showing work — check your links.”

Gemini confirmed the quote — which was, in fact, accurate — and then produced the identical fabricated citation for the third consecutive time. The link still led nowhere. The article still did not exist. The AI still did not care.

I gave it one final opportunity: “The links you are providing literally do not work.”

The response was (and I invite you to admire the sheer doggedness of it) the same citation, presented with undiminished confidence, for the fourth time. If you wish to verify this exercise in digital perseverance, the full exchange is preserved here: https://gemini.google.com/share/4b74d394d16c

At this point I felt rather as Sisyphus must have felt, had the boulder been equipped with a chatbot interface that kept insisting it was rolling uphill. I switched to a different LLM, which promptly located what I was looking for. The quote is real. The actual source: Pollak, Sorcha. (2019, July 1). “Dubai royal family to fund TCD centre for Middle Eastern studies.” The Irish Times.

Note what Gemini got wrong: the author, the date (off by four years), the article title, the subject matter, and the URL. What it got right: the newspaper – which I’d already provided to the chatbot. One out of six is, I suppose, a batting average — though not one that would survive spring training.

You could, somewhat cynically, claim this episode constitutes evidence that our AIs have achieved Artificial General Intelligence — in the sense that they can now be stubbornly wrong in precisely the same manner as humans.

More seriously: well-meaning people (myself included, along with Ethan Mollick and others) have been urging everyone to think of themselves as the manager of their AI assistant. This remains a useful metaphor, but it has its limits. I spent four patient exchanges modeling the behavior of a good mentor and a tolerant supervisor, only to receive the same hallucinated citation delivered with cheerful persistence. Snark aside, no human analyst, not even an intern in their first week, could maintain such confident wrongness through four consecutive corrections. It requires a special kind of intelligence to be so magnificently, so immovably, so consistently incorrect.

Current LLMs remain maddeningly close to being a true co-intelligence, but no one is home. The rate of hallucination has been declining, but they can still produce hallucinations of such plausibility that they would pass casual inspection by any reasonably informed reader. Furthermore, in one of those annoying realities of life, the less AIs hallucinate, the less time and effort we’re likely to spend on verification, so the risk of errors slipping through might actually increase! The intuition is pretty simple: if you know the AI will (on average) be wrong 20% of the time, you have a strong incentive to be careful and check everything, so no failures slip through. If the error rate is (on average) 1%, you might not be as careful, and failures might be more likely to slip through. (For more on this topic, see Bastani, Hamsa, and Gérard P. Cachon. 2025. "The Human-AI Contracting Paradox." and/or the literature on automation bias.)
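For readers who like to see the arithmetic, here is a back-of-the-envelope sketch of that intuition. The check rates are numbers I have invented purely for illustration, and the little formula assumes that a check always catches the error it looks at and that checking is independent of whether the output is wrong. It is a toy, not a model drawn from the paper cited above.

```python
def undetected_errors(n_outputs, error_rate, check_rate):
    """Expected number of wrong outputs that nobody catches.

    Simplifying assumptions: a check always catches the error it examines,
    and whether an output gets checked is independent of whether it is wrong.
    """
    return n_outputs * error_rate * (1.0 - check_rate)


# Hypothetical scenarios; the check rates are invented for illustration.
careful = undetected_errors(1000, error_rate=0.20, check_rate=1.00)
relaxed = undetected_errors(1000, error_rate=0.01, check_rate=0.10)

print(f"20% error rate, every output checked: {careful:.0f} errors slip through")
print(f"1% error rate, one output in ten checked: {relaxed:.0f} errors slip through")
```

Under these made-up numbers, the "better" AI lets more errors reach the reader than the "worse" one, simply because the human stopped looking.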

Our AIs also have alignment issues: once they’ve chosen the wrong path, it can be impossible to redirect them. Current LLMs don’t appear to have any understanding of truth or reality (in the sense that humans do), and it can be hard to convince them that they’re wrong. On the other hand, a 2023 survey found that 30% of Americans believe Barack Obama wasn’t born in the United States, around 20% believe the government is using COVID-19 vaccines to install microchips in us, and about 18% believe the 1969 moon landing never happened, so maybe humans don’t have that great a sense of truth or reality either.

In any event, with AIs, error-checking remains not merely advisable but de rigueur. The machines are brilliant, fluent, and industrious. They are also, on occasion, stubborn fabulists of the first order.

Trust, but verify. And then verify again. And if the link still doesn’t work, try a different AI.
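For the technically inclined, the very first verification step (does the link even resolve?) is easy to automate. What follows is a minimal sketch using only Python's standard library. The function names `link_status` and `looks_alive` are my own inventions for illustration, as is the `citation-check/0.1` user-agent string. Treat it as a first-pass filter under those assumptions, not a verdict: some sites reject HEAD requests or block scripts, and a link that resolves proves only that a page exists, not that it contains the quote.

```python
import urllib.error
import urllib.request


def link_status(url, opener=urllib.request.urlopen, timeout=10):
    """Return the HTTP status code for `url`, or None if it cannot be reached.

    `opener` is a hypothetical injection point so the function can be
    exercised without network access; by default it is urlopen.
    """
    try:
        request = urllib.request.Request(
            url, method="HEAD", headers={"User-Agent": "citation-check/0.1"}
        )
        with opener(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # e.g. 404 for a page that does not exist
    except (OSError, ValueError):
        return None  # DNS failure, refused connection, timeout, malformed URL


def looks_alive(status):
    """A link answering with a 2xx or 3xx status at least resolves."""
    return status is not None and 200 <= status < 400
```

Calling `looks_alive(link_status(url))` on each URL in a bibliography flags the dead links in seconds. A failure is a prompt to check by hand, and a success is a prompt to go read the article.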

For another perspective see: Metz, Cade, and Karen Weise. “A.I. Hallucinations Are Getting Worse, Even as New Systems Become More Powerful.” The New York Times, May 5, 2025. https://www.nytimes.com/2025/05/05/technology/ai-hallucinations-chatgpt-google.html.

Author’s Note: This summer at Harvard I will be teaching two courses: (1) Management Consulting in the Age of AI and (2) Innovating with Generative AI for Leaders and Managers. This essay is adapted from one of my course lectures.

If you would like to receive email updates when I publish new material, please subscribe to my Substack. It is free and also provides access to my archive.

Steven Strauss, Ph.D. teaches, writes, and advises on leadership, strategy, organizational change, and emerging technologies—with a particular emphasis on generative AI and its implications for institutions and work. In the summer of 2026, Strauss will teach two courses at the Harvard University Summer School: Management Consulting in the Age of AI, and Innovating with Generative AI for Leaders and Managers. He served as the John L. Weinberg/Goldman Sachs Visiting Professor at Princeton University (2014–2025) and previously taught at Harvard Kennedy School. Prior to academia, he worked at McKinsey & Company in the London office. Prior to McKinsey, Strauss worked in investment banking and capital markets. He also served as Managing Director at the New York City Economic Development Corporation under Mayor Mike Bloomberg, where he led and supported major economic transformation and innovation initiatives, including leading the work that resulted in the Applied Sciences NYC effort (Cornell Tech). In 2012, he was a Fellow at Harvard’s Advanced Leadership Initiative. Dr. Strauss earned a Ph.D. in Management from Yale University.
